Software Engineering Tutorial

Software engineering is the branch of engineering concerned with developing software products using well-defined scientific principles, methods, and procedures. Its outcome is an efficient and reliable software product. Software engineering provides a framework for software development that aims to ensure quality: it is the application of a systematic, disciplined process to produce reliable and economical software. This online course covers key software engineering concepts. Make notes while learning.


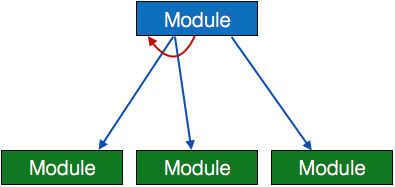

Example of a CASE tool.

Computer-aided software engineering (CASE) is the domain of software tools used to design and implement applications. CASE tools are similar to and were partly inspired by computer-aided design (CAD) tools used for designing hardware products. CASE tools are used for developing high-quality, defect-free, and maintainable software.[1] CASE software is often associated with methods for the development of information systems together with automated tools that can be used in the software development process.[2]

History

The Information System Design and Optimization System (ISDOS) project, started in 1968 at the University of Michigan, initiated a great deal of interest in the whole concept of using computer systems to help analysts in the very difficult process of analysing requirements and developing systems. Several papers by Daniel Teichroew fired a whole generation of enthusiasts with the potential of automated systems development. His Problem Statement Language / Problem Statement Analyzer (PSL/PSA) tool was a CASE tool although it predated the term.[3]

Another major thread emerged as a logical extension to the data dictionary of a database. By extending the range of metadata held, the attributes of an application could be held within a dictionary and used at runtime. This 'active dictionary' became the precursor to the more modern model-driven engineering capability. However, the active dictionary did not provide a graphical representation of any of the metadata. It was the linking of the concept of a dictionary holding analysts' metadata, as derived from the use of an integrated set of techniques, together with the graphical representation of such data that gave rise to the earlier versions of CASE.[4]

The next entrant into the market was Excelerator from Index Technology in Cambridge, Mass. While DesignAid ran on Convergent Technologies and later Burroughs Ngen networked microcomputers, Index launched Excelerator on the IBM PC/AT platform. At the time of launch, and for several years afterward, the IBM platform did not support networking or a centralized database as the Convergent Technologies and Burroughs machines did, but the allure of IBM was strong, and Excelerator came to prominence. Hot on the heels of Excelerator was a rash of offerings from companies such as Knowledgeware (James Martin, Fran Tarkenton and Don Addington), Texas Instruments' CA Gen, and Andersen Consulting's FOUNDATION toolset (DESIGN/1, INSTALL/1, FCP).[5]

CASE tools were at their peak in the early 1990s.[6] According to the PC Magazine of January 1990, over 100 companies were offering nearly 200 different CASE tools.[5] At the time IBM had proposed AD/Cycle, which was an alliance of software vendors centered on IBM's Software repository using IBM DB2 in mainframe and OS/2:

- The application development tools can be from several sources: from IBM, from vendors, and from the customers themselves. IBM has entered into relationships with Bachman Information Systems, Index Technology Corporation, and Knowledgeware wherein selected products from these vendors will be marketed through an IBM complementary marketing program to provide offerings that will help to achieve complete life-cycle coverage.[7]

With the decline of the mainframe, AD/Cycle and the Big CASE tools died off, opening the market for the mainstream CASE tools of today. Many of the leaders of the CASE market of the early 1990s ended up being purchased by Computer Associates, including IEW, IEF, ADW, Cayenne, and Learmonth & Burchett Management Systems (LBMS). The other trend that led to the evolution of CASE tools was the rise of object-oriented methods and tools. Most tool vendors added some support for object-oriented methods and tools. In addition, new products arose that were designed from the bottom up to support the object-oriented approach. Andersen developed its project Eagle as an alternative to Foundation. Several thought leaders in object-oriented development, including Jacobson, Rumbaugh, and Booch, each developed their own methodology and CASE tool set. Eventually, these diverse tool sets and methods were consolidated via standards led by the Object Management Group (OMG). The OMG's Unified Modeling Language (UML) is currently widely accepted as the industry standard for object-oriented modeling.

CASE Software

A. Fuggetta classified CASE software into three categories:[8]

- Tools support specific tasks in the software life-cycle.

- Workbenches combine two or more tools focused on a specific part of the software life-cycle.

- Environments combine two or more tools or workbenches and support the complete software life-cycle.

Tools

CASE tools support specific tasks in the software development life-cycle. They can be divided into the following categories:

- Business and Analysis modeling. Graphical modeling tools. E.g., E/R modeling, object modeling, etc.

- Development. Design and construction phases of the life-cycle. Debugging environments. E.g., IISE LKO.

- Verification and validation. Analyze code and specifications for correctness, performance, etc.

- Configuration management. Control the check-in and check-out of repository objects and files. E.g., SCCS, IISE.

- Metrics and measurement. Analyze code for complexity, modularity (e.g., no 'go to's'), performance, etc.

- Project management. Manage project plans, task assignments, scheduling.

Another common way to classify CASE tools is the distinction between Upper CASE and Lower CASE. Upper CASE tools support business and analysis modeling. They support traditional diagrammatic languages such as ER diagrams, data flow diagrams, structure charts, decision trees, and decision tables. Lower CASE tools support development activities, such as physical design, debugging, construction, testing, component integration, maintenance, and reverse engineering. All other activities span the entire life-cycle and apply equally to Upper and Lower CASE.[9]

Workbenches

Workbenches integrate two or more CASE tools and support specific software-process activities. Hence they achieve:

- a homogeneous and consistent interface (presentation integration).

- seamless integration of tools and tool chains (control and data integration).

An example workbench is Microsoft's Visual Basic programming environment. It incorporates several development tools: a GUI builder, smart code editor, debugger, etc. Most commercial CASE products tended to be such workbenches that seamlessly integrated two or more tools. Workbenches can also be classified in the same manner as tools: as focusing on analysis, development, verification, and so on, and as Upper CASE, Lower CASE, or processes such as configuration management that span the complete life-cycle.

Environments

An environment is a collection of CASE tools or workbenches that attempts to support the complete software process. This contrasts with tools that focus on one specific task or a specific part of the life-cycle. CASE environments are classified by Fuggetta as follows:[8]

- Toolkits. Loosely coupled collections of tools. These typically build on operating system workbenches such as the Unix Programmer's Workbench or the VMS VAX set. They typically perform integration via piping or some other basic mechanism to share data and pass control. The strength of easy integration is also one of the drawbacks. Simple passing of parameters via technologies such as shell scripting can't provide the kind of sophisticated integration that a common repository database can.

- Fourth generation. These environments are also known as 4GL (fourth-generation language) environments because the early environments were designed around specific languages such as Visual Basic. They were the first environments to provide deep integration of multiple tools. Typically these environments focused on specific types of applications, for example user-interface-driven applications that performed standard atomic transactions against a relational database. Examples are Informix 4GL and Focus.

- Language-centered. Environments based on a single often object-oriented language such as the Symbolics Lisp Genera environment or VisualWorks Smalltalk from Parcplace. In these environments all the operating system resources were objects in the object-oriented language. This provides powerful debugging and graphical opportunities but the code developed is mostly limited to the specific language. For this reason, these environments were mostly a niche within CASE. Their use was mostly for prototyping and R&D projects. A common core idea for these environments was the model-view-controller user interface that facilitated keeping multiple presentations of the same design consistent with the underlying model. The MVC architecture was adopted by the other types of CASE environments as well as many of the applications that were built with them.

- Integrated. These environments are what most IT people tend to think of first when they think of CASE. Examples include IBM's AD/Cycle, Andersen Consulting's FOUNDATION, the ICL CADES system, and DEC Cohesion. These environments attempt to cover the complete life-cycle from analysis to maintenance and provide an integrated database repository for storing all artifacts of the software process. The integrated software repository was the defining feature of these kinds of tools. They provided multiple different design models as well as support for code in heterogeneous languages. One of the main goals for these types of environments was 'round trip engineering': being able to make changes at the design level and have those automatically reflected in the code, and vice versa. These environments were also typically associated with a particular methodology for software development. For example, the FOUNDATION CASE suite from Andersen was closely tied to the Andersen Method/1 methodology.

- Process-centered. This is the most ambitious type of integration. These environments attempt to not just formally specify the analysis and design objects of the software process but the actual process itself and to use that formal process to control and guide software projects. Examples are East, Enterprise II, Process Wise, Process Weaver, and Arcadia. These environments were by definition tied to some methodology since the software process itself is part of the environment and can control many aspects of tool invocation.

In practice, the distinction between workbenches and environments was flexible. Visual Basic for example was a programming workbench but was also considered a 4GL environment by many. The features that distinguished workbenches from environments were deep integration via a shared repository or common language and some kind of methodology (integrated and process-centered environments) or domain (4GL) specificity.[8]

Major CASE Risk Factors

Some of the most significant risk factors for organizations adopting CASE technology include:

- Inadequate standardization. Organizations usually have to tailor and adopt methodologies and tools to their specific requirements. Doing so may require significant effort to integrate both divergent technologies and divergent methods. For example, before the adoption of the UML standard, the diagram conventions and methods for designing object-oriented models were vastly different among followers of Jacobson, Booch, and Rumbaugh.

- Unrealistic expectations. The proponents of CASE technology—especially vendors marketing expensive tool sets—often hype expectations that the new approach will be a silver bullet that solves all problems. In reality no such technology can do that and if organizations approach CASE with unrealistic expectations they will inevitably be disappointed.

- Inadequate training. As with any new technology, CASE requires time to train people in how to use the tools and to get up to speed with them. CASE projects can fail if practitioners are not given adequate time for training or if the first project attempted with the new technology is itself highly mission critical and fraught with risk.

- Inadequate process control. CASE provides significant new capabilities to utilize new types of tools in innovative ways. Without the proper process guidance and controls these new capabilities can cause significant new problems as well.[10]

References

1. Kuhn, D.L. (1989). 'Selecting and effectively using a computer aided software engineering tool'. Annual Westinghouse computer symposium; 6–7 Nov 1989; Pittsburgh, PA (U.S.); DOE Project.

2. Loucopoulos, P.; Karakostas, V. (1995). System Requirements Engineering.

3. Teichroew, Daniel; Hershey, Ernest Allen (1976). 'PSL/PSA a computer-aided technique for structured documentation and analysis of information processing systems'. Proceedings of the 2nd International Conference on Software Engineering (ICSE '76). IEEE Computer Society Press.

4. Coronel, Carlos; Morris, Steven (February 4, 2014). Database Systems: Design, Implementation, & Management. Cengage Learning. pp. 695–700. ISBN 978-1285196145. Retrieved 25 November 2014.

5. Ziff Davis, Inc. (1990-01-30). PC Mag. Ziff Davis, Inc.

6. Yourdon, Ed (Jul 23, 2001). 'Can XP Projects Grow?'. Computerworld. Retrieved 25 November 2014.

7. 'AD/Cycle strategy and architecture'. IBM Systems Journal, Vol 29, No 2, 1990; p. 172.

8. Fuggetta, Alfonso (December 1993). 'A classification of CASE technology'. Computer. 26 (12): 25–38. doi:10.1109/2.247645. Retrieved 2009-03-14.

9. Sabharwal, Sangeeta. Software Engineering: Tools, Principles and Techniques. Umesh Publications.

10. Computer Aided Software Engineering. In: FFIEC IT Examination Handbook InfoBase. Retrieved 3 Mar 2012.

A software metric is a measure of software characteristics which are quantifiable or countable. Software metrics are important for many reasons, including measuring software performance, planning work items, measuring productivity, and many other uses.

Within the software development process, there are many metrics that are all related to each other. Software metrics are related to the four functions of management: Planning, Organization, Control, or Improvement.

In this article, we are going to discuss several topics including many examples of software metrics:

- Benefits of Software Metrics

- How Software Metrics Lack Clarity

- How to Track Software Metrics

- Examples of Software Metrics

Benefits of Software Metrics

The goal of tracking and analyzing software metrics is to determine the quality of the current product or process, improve that quality and predict the quality once the software development project is complete. On a more granular level, software development managers are trying to:

- Increase return on investment (ROI)

- Identify areas of improvement

- Manage workloads

- Reduce overtime

- Reduce costs

These goals can be achieved by providing information and clarity throughout the organization about complex software development projects. Metrics are an important component of quality assurance, management, debugging, performance, and estimating costs, and they’re valuable for both developers and development team leaders:

- Managers can use software metrics to identify, prioritize, track and communicate any issues to foster better team productivity. This enables effective management and allows assessment and prioritization of problems within software development projects. The sooner managers can detect software problems, the easier and less-expensive the troubleshooting process.

- Software development teams can use software metrics to communicate the status of software development projects, pinpoint and address issues, and monitor, improve on, and better manage their workflow.

Software metrics offer an assessment of the impact of decisions made during software development projects. This helps managers assess and prioritize objectives and performance goals.

How Software Metrics Lack Clarity

Terms used to describe software metrics often have multiple definitions and ways to count or measure characteristics. For example, lines of code (LOC) is a common measure of software development. But there are two ways to count each line of code:

- One is to count each physical line that ends with a return. But some software developers don’t accept this count because it may include lines of “dead code” or comments.

- To get around those shortfalls and others, each logical statement could be considered a line of code.

Thus, a single software package could have two very different LOC counts depending on which counting method is used. That makes it difficult to compare software simply by lines of code or any other metric without a standard definition, which is why establishing a measurement method and consistent units of measurement to be used throughout the life of the project is crucial.
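To make the difference concrete, here is a minimal sketch of the two counting methods applied to the same snippet. The function names and the sample source are made up for illustration, and the logical count is deliberately naive: it assumes `#` comments on their own lines and treats every `;` as a statement separator, so it would miscount a `;` inside a string literal.

```python
def count_physical_lines(source: str) -> int:
    """Count every physical line, including blank lines and comments."""
    return len(source.splitlines())


def count_logical_lines(source: str) -> int:
    """Rough logical count: skip blank and comment-only lines, split on ';'."""
    total = 0
    for line in source.splitlines():
        stripped = line.strip()
        if not stripped or stripped.startswith("#"):
            continue
        # Naive assumption: each ';'-separated fragment is one statement.
        total += sum(1 for part in stripped.split(";") if part.strip())
    return total


# Hypothetical sample: a comment, two statements on one line, a blank line,
# and two ordinary statements.
SAMPLE = """\
# compute a total
x = 1; y = 2

total = x + y
print(total)
"""

physical = count_physical_lines(SAMPLE)
logical = count_logical_lines(SAMPLE)
```

For this sample the physical count is 5 and the logical count is 4, even though both describe the same code. Whichever rule a team picks, it needs to be written down and applied the same way for the life of the project.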

There is also an issue with how software metrics are used. If an organization uses productivity metrics that emphasize volume of code and errors, software developers could avoid tackling tricky problems to keep their LOC up and error counts down. Software developers who write a large amount of simple code may have great productivity numbers but not great software development skills. Additionally, software metrics shouldn’t be monitored simply because they’re easy to obtain and display – only metrics that add value to the project and process should be tracked.

How to Track Software Metrics

Software metrics are great for management teams because they offer a quick way to track software development, set goals and measure performance. But oversimplifying software development can distract software developers from goals such as delivering useful software and increasing customer satisfaction.

Of course, none of this matters if the measurements that are used in software metrics are not collected or the data is not analyzed. The first problem is that software development teams may consider it more important to actually do the work than to measure it.

It becomes imperative to make measurement easy to collect or it will not be done. Make the software metrics work for the software development team so that it can work better. Measuring and analyzing doesn’t have to be burdensome or something that gets in the way of creating code. Software metrics should have several important characteristics. They should be:

- Simple and computable

- Consistent and unambiguous (objective)

- Expressed in consistent units of measurement

- Independent of programming languages

- Easy to calibrate and adaptable

- Easy and cost-effective to obtain

- Able to be validated for accuracy and reliability

- Relevant to the development of high-quality software products

This is why software development platforms that automatically measure and track metrics are important. But software development teams and management run the risk of having too much data and not enough emphasis on the software metrics that help deliver useful software to customers.

The technical question of how software metrics are collected, calculated and reported is not as important as deciding how to use software metrics. Patrick Kua outlines four guidelines for an appropriate use of software metrics:

1. Link software metrics to goals.

Often sets of software metrics are communicated to software development teams as goals. So the focus becomes:

- Reducing the lines of codes

- Reducing the number of bugs reported

- Increasing the number of software iterations

- Speeding up the completion of tasks

Those metrics are useful as targets only insofar as they help software developers reach more important goals, such as improving software usefulness and user experience.

For example, size-based software metrics often measure lines of code to indicate coding complexity or software efficiency. In an effort to reduce the code’s complexity, management may place restrictions on how many lines of code are to be written to complete functions. To reach that target, software developers could write more functions that each have fewer lines of code, which neither reduces overall code complexity nor improves software efficiency.

When developing goals, management needs to involve the software development teams in establishing goals, choosing software metrics that measure progress toward those goals, and aligning metrics with those goals.

2. Track trends, not numbers.

Software metrics are very seductive to management because complex processes are represented as simple numbers. And those numbers are easy to compare to other numbers. So when a software metric target is met, it is easy to declare success. Not reaching that number lets software development teams know they need to work more on reaching that target.

These simple targets do not offer as much information on how the software metrics are trending. Any single data point is not as significant as the trend it is part of. Analysis of why the trend line is moving in a certain direction or at what rate it is moving will say more about the process. Trends also will show what effect any process changes have on progress.

The psychological effects of observing a trend – similar to the Hawthorne Effect, or changes in behavior resulting from awareness of being observed – can be greater than focusing on a single measurement. If the target is not met, that, unfortunately, can be seen as a failure. But a trend line showing progress toward a target offers incentive and insight into how to reach that target.
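As a rough illustration of reading a trend instead of a single number, the sketch below fits a least-squares line through evenly spaced measurements and reports its slope. The function name, the weekly open-bug counts, and the target of 25 are all invented for the example.

```python
def trend_slope(values: list[float]) -> float:
    """Least-squares slope of values measured at evenly spaced periods 0, 1, 2, ..."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two data points to compute a trend")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    numerator = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    denominator = sum((x - mean_x) ** 2 for x in range(n))
    return numerator / denominator


# Hypothetical open-bug counts for five consecutive weeks.
open_bugs = [40, 36, 33, 29, 26]
target = 25  # hypothetical target

slope = trend_slope(open_bugs)          # change in open bugs per week
target_met = open_bugs[-1] <= target    # the single-number view
```

Here the latest value (26) misses the target, so the single-number view reports a failure, while the slope of -3.5 bugs per week shows steady progress toward it.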

3. Set shorter measurement periods.

Software development teams want to spend their time getting the work done, not checking whether they are reaching management-established targets. So a hands-off approach might be to set the target sometime in the future and not bother the software team until it is time to tell them whether they succeeded or failed to reach the target.

By breaking the measurement periods into smaller time frames, the software development team can check the software metrics — and the trend line — to determine how well they are progressing.

Yes, that is an interruption, but giving software development teams more time to analyze their progress and change tactics when something is not working is very productive. The shorter periods of measurement offer more data points that can be useful in reaching goals, not just software metric targets.

4. Stop using software metrics that do not lead to change.

We all know that repeating the same actions while expecting different results is the definition of insanity. But repeating the same work, without adjustments, when it does not achieve goals is the definition of managing by metrics.

Why would software developers keep doing something that is not getting them closer to goals such as better software experiences? Because they are focusing on software metrics that do not measure progress toward that goal.

Some software metrics have no value when it comes to indicating software quality or team workflow. Management and software development teams need to work on software metrics that drive progress towards goals and provide verifiable, consistent indicators of progress.

Examples of Software Metrics

There is no standard definition of which software metrics have value to software development teams, and software metrics have different value to different teams. It depends on the goals of each software development team.

As a starting point, here are some software metrics that can help developers track their progress.

Agile process metrics

Agile process metrics focus on how agile teams make decisions and plan. These metrics do not describe the software, but they can be used to improve the software development process.

Lead time

Lead time quantifies how long it takes for ideas to be developed and delivered as software. Lowering lead time is a way to improve how responsive software developers are to customers.

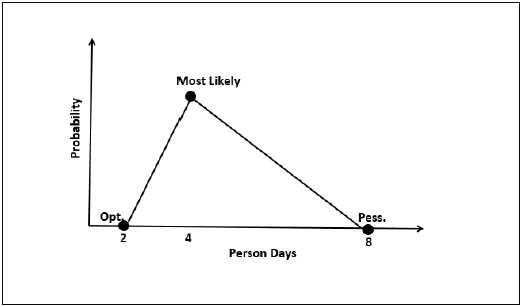

Screenshot via Pearsoned.co.uk

Cycle time

Cycle time describes how long it takes to change the software system and implement that change in production.
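One way to compute both numbers, sketched under the assumption that each work item records three timestamps: when the idea was requested, when work on the change started, and when it reached production. The `WorkItem` fields and the sample dates are hypothetical; teams draw these boundaries differently.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class WorkItem:
    requested: datetime  # idea was logged (assumed start of lead time)
    started: datetime    # work on the change began (assumed start of cycle time)
    deployed: datetime   # change went live in production


def lead_time(item: WorkItem) -> timedelta:
    """Time from the idea being requested to delivery."""
    return item.deployed - item.requested


def cycle_time(item: WorkItem) -> timedelta:
    """Time from starting the change to having it in production."""
    return item.deployed - item.started


def mean_duration(durations: list[timedelta]) -> timedelta:
    """Average a non-empty list of durations."""
    return sum(durations, timedelta()) / len(durations)


# Hypothetical work items.
items = [
    WorkItem(datetime(2024, 1, 1), datetime(2024, 1, 3), datetime(2024, 1, 6)),
    WorkItem(datetime(2024, 1, 2), datetime(2024, 1, 8), datetime(2024, 1, 9)),
]

mean_lead = mean_duration([lead_time(i) for i in items])
mean_cycle = mean_duration([cycle_time(i) for i in items])
```

In this sample the mean lead time is 6 days and the mean cycle time is 2 days; the gap between them is time the items spent waiting before anyone started work.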

Team velocity

Team velocity measures how many software units a team completes in an iteration or sprint. This is an internal metric that should not be used to compare software development teams. The definition of deliverables changes for individual software development teams over time and the definitions are different for different teams.

Open/close rates

Open/close rates are calculated by tracking production issues reported in a specific time period. It is important to pay attention to how this software metric trends.
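A minimal sketch of the calculation, assuming each production issue is stored as an `(opened, closed)` pair of dates with `closed` set to `None` while the issue is still open. The function name and the sample issues are made up.

```python
from datetime import date
from typing import Optional

Issue = tuple[date, Optional[date]]  # (opened, closed or None if still open)


def open_close_counts(issues: list[Issue], start: date, end: date) -> tuple[int, int]:
    """Return (issues opened, issues closed) within the inclusive period."""
    opened = sum(1 for opened_on, _ in issues if start <= opened_on <= end)
    closed = sum(
        1 for _, closed_on in issues
        if closed_on is not None and start <= closed_on <= end
    )
    return opened, closed


# Hypothetical production issues.
issues = [
    (date(2024, 3, 1), date(2024, 3, 3)),
    (date(2024, 3, 2), None),
    (date(2024, 3, 5), date(2024, 3, 12)),
    (date(2024, 2, 20), date(2024, 3, 4)),
]

opened_count, closed_count = open_close_counts(
    issues, date(2024, 3, 1), date(2024, 3, 7)
)
```

For the first week of March this gives 3 opened and 2 closed. Running the same function over successive periods produces the series whose trend matters more than any single pair of counts.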

Production

Production metrics attempt to measure how much work is done and determine the efficiency of software development teams. The software metrics that use speed as a factor are important to managers who want software delivered as fast as possible.

Active days

Active days is a measure of how much time a software developer contributes code to the software development project. This does not include planning and administrative tasks. The purpose of this software metric is to assess the hidden costs of interruptions.

Assignment scope

Assignment scope is the amount of code that a programmer can maintain and support in a year. This software metric can be used to plan how many people are needed to support a software system and compare teams.

Efficiency

Efficiency attempts to measure the amount of productive code contributed by a software developer. The amount of churn shows the lack of productive code. Thus a software developer with a low churn could have highly efficient code.

Code churn

Code churn represents the number of lines of code that were modified, added or deleted in a specified period of time. If code churn increases, then it could be a sign that the software development project needs attention.
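As a sketch of how churn might be tallied, the function below sums the per-file counts in the tab-separated format that `git log --numstat` prints (`added<TAB>deleted<TAB>path`, with `-` in place of numbers for binary files). Note that this format reports a modified line as one addition plus one deletion. The sample text and file paths are invented; in practice the input would come from running the command over the period of interest.

```python
def churn_from_numstat(numstat_output: str) -> int:
    """Sum added + deleted lines from numstat-style text, skipping binary files."""
    total = 0
    for line in numstat_output.splitlines():
        parts = line.split("\t")
        if len(parts) != 3:
            continue  # blank lines, commit headers, anything not a numstat row
        added, deleted, _path = parts
        if added == "-" or deleted == "-":
            continue  # binary file: no line counts available
        total += int(added) + int(deleted)
    return total


# Hypothetical numstat rows for one period.
SAMPLE_NUMSTAT = "12\t4\tsrc/app.py\n-\t-\tdocs/logo.png\n\n3\t0\tREADME.md\n"

churn = churn_from_numstat(SAMPLE_NUMSTAT)
```

The sample totals 19 changed lines. Comparing this figure across equal-length periods is what reveals whether churn is rising.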

Impact

Impact measures the effect of any code change on the software development project. A code change that affects multiple files could have more impact than a code change affecting a single file.

Mean time between failures (MTBF) and mean time to recover/repair (MTTR)

Both metrics measure how the software performs in the production environment. Since software failures are almost unavoidable, these software metrics attempt to quantify how well the software recovers and preserves data.
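The sketch below computes both figures from a list of outages inside an observation window. It assumes one common pair of definitions (MTTR as total downtime divided by the number of failures, MTBF as total uptime divided by the number of failures); other definitions exist, and the incident data here is invented.

```python
from datetime import datetime, timedelta

Incident = tuple[datetime, datetime]  # (failure began, service recovered)


def total_downtime(incidents: list[Incident]) -> timedelta:
    """Add up the length of every outage."""
    return sum((end - start for start, end in incidents), timedelta())


def mttr(incidents: list[Incident]) -> timedelta:
    """Mean time to recover: average outage length (needs at least one incident)."""
    return total_downtime(incidents) / len(incidents)


def mtbf(incidents: list[Incident], window_start: datetime, window_end: datetime) -> timedelta:
    """Mean time between failures: uptime in the window divided by failure count."""
    uptime = (window_end - window_start) - total_downtime(incidents)
    return uptime / len(incidents)


# Hypothetical ten-day observation window with two outages (2 h and 4 h).
window = (datetime(2024, 1, 1), datetime(2024, 1, 11))
incidents = [
    (datetime(2024, 1, 3, 10), datetime(2024, 1, 3, 12)),
    (datetime(2024, 1, 8, 9), datetime(2024, 1, 8, 13)),
]

mean_recovery = mttr(incidents)
mean_between = mtbf(incidents, *window)
```

With 6 hours of downtime in a 240-hour window, MTTR comes out at 3 hours and MTBF at 117 hours.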

Application crash rate (ACR)

Application crash rate is calculated by dividing how many times an application fails (F) by how many times it is used (U).

ACR = F/U
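The formula translates directly into code. In this sketch the function name and the sample counts are made up, and the zero-usage case is handled by raising an error, which is a design choice rather than part of the definition.

```python
def application_crash_rate(failures: int, uses: int) -> float:
    """ACR = F / U, where F is the number of failures and U the number of uses."""
    if uses <= 0:
        raise ValueError("the application must have been used at least once")
    return failures / uses


# Hypothetical figures: 3 crashes across 1,200 sessions.
acr = application_crash_rate(failures=3, uses=1200)
```

Here the result is 0.0025, or one crash per 400 sessions. What counts as a "use" (a launch, a session, a request) has to be fixed in advance for the number to be comparable over time.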

Security metrics

Security metrics reflect a measure of software quality. These metrics need to be tracked over time to show how software development teams are developing security responses.

Endpoint incidents

Endpoint incidents are how many devices have been infected by a virus in a given period of time.

Mean time to repair (MTTR)

Mean time to repair in this context measures the time from the security breach discovery to when a working remedy is deployed.

Size-oriented metrics

Size-oriented metrics focus on the size of the software and are usually expressed in thousands of lines of code (KLOC). Size is a fairly easy software metric to collect once decisions are made about what constitutes a line of code. Unfortunately, it is not useful for comparing software projects written in different languages. Some examples include:

- Errors per KLOC

- Defects per KLOC

- Cost per KLOC
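Each of these is the same normalization: divide a project total by the size in KLOC. A minimal sketch, with a hypothetical helper name and invented project figures:

```python
def per_kloc(quantity: float, lines_of_code: int) -> float:
    """Normalize a project total (defects, errors, cost) per thousand lines of code."""
    if lines_of_code <= 0:
        raise ValueError("lines_of_code must be positive")
    return quantity / (lines_of_code / 1000)


# Hypothetical project: 30,000 lines of code, 45 defects, total cost of 60,000.
defects_per_kloc = per_kloc(45, 30_000)
cost_per_kloc = per_kloc(60_000, 30_000)
```

This yields 1.5 defects per KLOC and a cost of 2,000 per KLOC. The result is only as meaningful as the line-counting rule behind `lines_of_code`.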

Function-oriented metrics

Function-oriented metrics focus on how much functionality software offers. But functionality cannot be measured directly. So function-oriented software metrics rely on calculating the function point (FP) — a unit of measurement that quantifies the business functionality provided by the product. Function points are also useful for comparing software projects written in different languages.

Function points are not an easy concept to master and methods vary. This is why many software development managers and teams skip function points altogether. They do not perceive function points as worth the time.

Errors per FP or Defects per FP

These software metrics are used as indicators of an information system’s quality. Software development teams can use these software metrics to reduce miscommunications and introduce new control measures.

Defect Removal Efficiency (DRE)

The Defect Removal Efficiency is used to quantify how many defects were found by the end user after product delivery (D) in relation to the errors found before product delivery (E). The formula is:

DRE = E / (E+D)

The closer to 1 DRE is, the fewer defects found after product delivery.
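A short worked example of the formula, using E and D as defined above. The function name and the defect counts are invented for illustration.

```python
def defect_removal_efficiency(found_before: int, found_after: int) -> float:
    """DRE = E / (E + D): E found before delivery, D found by end users after."""
    total = found_before + found_after
    if total == 0:
        raise ValueError("DRE is undefined when no defects have been found")
    return found_before / total


# Hypothetical release: 90 defects caught before delivery, 10 reported afterwards.
dre = defect_removal_efficiency(found_before=90, found_after=10)
```

With these numbers DRE is 0.9, meaning 90% of the known defects were removed before the product reached users.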

With dozens of potential software metrics to track, it’s crucial for development teams to evaluate their needs and select metrics that are aligned with business goals, relevant to the project, and represent valid measures of progress. Monitoring the right metrics (as opposed to not monitoring metrics at all or monitoring metrics that don’t really matter) can mean the difference between a highly efficient, productive team and a floundering one. The same is true of software testing: using the right tests to evaluate the right features and functions is the key to success. (Check out our guide on software testing to learn more about the various testing types.)

While the process of defining goals, selecting metrics, and implementing consistent measurement methods can be time-consuming, the productivity gains and time saved over the life of a project make it time well invested. Various software metrics are incorporated into solutions such as application performance management (APM) tools, along with data and insights on application usage, code performance, slow requests, and much more. Retrace, Stackify’s APM solution, combines APM, logs, errors, monitoring, and metrics in one, providing a fully-integrated, multi-environment application performance solution to level-up your development work. Check out Stackify’s interview with John Sumser with HR Examiner, and one of Forbes Magazine’s 20 to Watch in Big Data, for more insights on DevOps and Big Data.

Additional Resources and Tutorials

Because there is little standardization in the field of software metrics, there are many opinions and options to learn more.

- Software Metrics (class notes from the MIT class “Software Engineering Concepts”)

- Guide to Advanced Empirical Software Engineering (Forrest Shull, Janice Singer, Dag I. K. Sjøberg; Springer Science & Business Media)
